Last updated: May 1, 2026

Is the language you already know actually the right one for your next scraping project? That instinct to reach for the familiar tool is understandable – but when it comes to PHP vs Python web scraping, the choice has real consequences for how long your script takes to write, how far it scales, and how much pain you absorb when the target site changes its structure. Here is the practical breakdown.

The Case for PHP: Embedded, Familiar, Surprisingly Capable

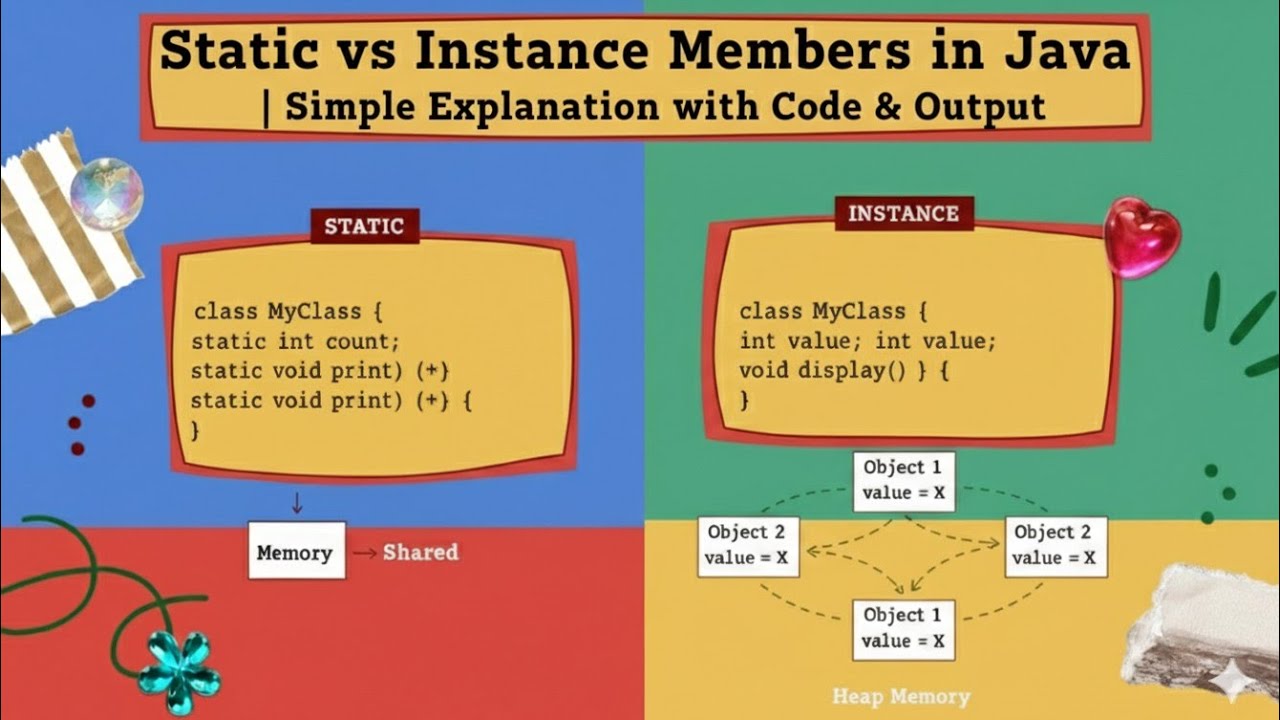

Image: Oxylabs

PHP is a legitimate scraping option – particularly when the scraping task lives inside an existing PHP application. That covers a lot of ground. PHP powers roughly 71% of all websites with a known server-side language, which means a huge portion of developers already have it running in their stack. Adding a scraper inside a WordPress plugin or a Laravel application is operationally simpler than introducing a second runtime.

PHP handles HTTP natively through cURL, and the ecosystem does include capable libraries. Guzzle manages HTTP requests with a clean interface. Symfony DomCrawler lets you traverse HTML with CSS selectors and XPath (a query language for navigating XML and HTML documents). Between them, you can fetch a page and extract structured data without much ceremony – if you already know the language.

The catch is verbosity. A basic scraping task in PHP – fetch a page, parse the HTML, extract a single element – requires cURL initialisation, option-setting, response handling, DOMDocument instantiation, and XPath traversal. That is five distinct steps before you have extracted anything. Not difficult, just noisy. And PHP’s ecosystem was shaped by web development needs, not data science, so library support for anything beyond basic parsing thins out quickly.

The Case for Python: Purpose-Built for Extraction

Python is the dominant language in the data collection space for a simple reason: its core scraping libraries fit extraction workflows closely. Requests handles HTTP fetching in a single readable line. BeautifulSoup parses HTML with an intuitive API. Scrapy provides a full crawling framework with built-in concurrency, pipelines, and middleware – the kind of infrastructure that takes weeks to bolt onto PHP.
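To make the Requests and BeautifulSoup workflow concrete, here is a minimal sketch. The function names `fetch_html` and `extract_heading`, the choice of the first `h1` as the target element, and the example.com URL are all assumptions for illustration; a real scraper would pick selectors to match its target site.

```python
from typing import Optional

import requests
from bs4 import BeautifulSoup


def fetch_html(url: str, timeout: float = 10.0) -> str:
    """Fetch a page and return its HTML, raising on HTTP error statuses."""
    response = requests.get(url, timeout=timeout)
    response.raise_for_status()
    return response.text


def extract_heading(html: str) -> Optional[str]:
    """Return the text of the first <h1>, or None if the page has none."""
    soup = BeautifulSoup(html, "html.parser")
    heading = soup.find("h1")
    return heading.get_text(strip=True) if heading else None


if __name__ == "__main__":
    # example.com is a placeholder target (assumption).
    print(extract_heading(fetch_html("https://example.com")))
```

Keeping the fetch and the parse in separate functions means the parsing logic can be exercised against saved HTML without touching the network.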

The syntax matters too. Python reads close to plain English, which lowers the barrier to entry for writing and maintaining scraping scripts. A team that inherits a Python scraper six months after it was written can usually follow the logic without a guide. That is not always true of PHP’s cURL boilerplate.

Python’s broader ecosystem extends the advantage further. For a scraping task that graduates into data analysis, visualisation, or machine learning, the same environment handles all of it. Libraries like Pandas (data manipulation), Playwright (browser automation), and Celery (task queuing) integrate naturally. The pipeline from raw HTML to structured insight is genuinely shorter.

PHP vs Python Web Scraping: Where the Real Differences Show

For simple tasks, Python wins on ergonomics; for embedded tasks inside PHP applications, PHP wins on integration. That is the clean split. But in 2026, most scraping projects are not simple, and the language choice is rarely the biggest decision you will face.

Modern websites increasingly rely on JavaScript rendering to deliver their content. On those sites, a static HTTP request – regardless of whether it comes from PHP or Python – often returns little more than an empty shell. Anti-bot systems have grown aggressive enough that rotating user agents and spoofing headers are table stakes, not an edge case. The infrastructure layer – proxy management, retry logic, rate limiting, job orchestration, and storage – is harder and more time-consuming to build than the parsing logic itself. Three things determine whether a large-scale scraper works: reliable fetching, robust orchestration, and sensible data modelling. Language syntax is a distant fourth.
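As one small piece of that infrastructure layer, retry logic with rotating user agents might look roughly like the sketch below. It uses only the standard library. The names `http_get` and `fetch_with_retries`, the placeholder user-agent strings, and the backoff values are assumptions, not a recommended production configuration.

```python
import itertools
import time
import urllib.request
from typing import Callable, Iterable

# Placeholder user-agent strings (assumptions, not real browser values).
USER_AGENTS = ["example-agent/1.0", "example-agent/2.0"]


def http_get(url: str, user_agent: str) -> str:
    """Plain static fetch using only the standard library."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")


def fetch_with_retries(
    url: str,
    get: Callable[[str, str], str] = http_get,
    user_agents: Iterable[str] = USER_AGENTS,
    max_attempts: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Retry a fetch with exponential backoff, rotating the User-Agent."""
    agents = itertools.cycle(user_agents)
    for attempt in range(max_attempts):
        try:
            return get(url, next(agents))
        except OSError:  # urllib's URLError and socket timeouts subclass OSError
            if attempt == max_attempts - 1:
                raise
            # Wait base_delay, then 2x, then 4x ... between attempts.
            sleep(base_delay * 2 ** attempt)
    raise ValueError("max_attempts must be at least 1")
```

Passing `get` and `sleep` in as parameters is a design choice for this sketch: it lets the retry behaviour be checked with a fake fetcher and no real waiting.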

Most serious scraping setups in 2026 use specialised layers rather than a single monolithic tool: one service for fetching (often a managed proxy or headless browser service), another for parsing, and a third for orchestration. At that point, the language used for the parsing layer matters less than its library support and your team’s familiarity with it. Web performance at the infrastructure level – caching, connection management, efficient storage – becomes as important as any scraping logic. Our website speed optimisation guide covers some of the underlying principles that apply here too.
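The layered approach can be sketched in a few lines. In the hypothetical example below, the fetching layer is any callable that returns HTML for a URL (standing in for a managed proxy or headless browser service), the parsing layer is a small standard-library parser, and `run_pipeline` plays the role of a very simple orchestrator. All names here are assumptions for illustration.

```python
from dataclasses import dataclass
from html.parser import HTMLParser
from typing import Callable, Iterable, List, Optional


@dataclass
class Record:
    url: str
    title: Optional[str]


class TitleParser(HTMLParser):
    """Parsing layer: collects the text inside the first non-empty <title>."""

    def __init__(self) -> None:
        super().__init__()
        self.title: Optional[str] = None
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        if tag == "title" and self.title is None:
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title = (self.title or "") + data


def parse_title(html: str) -> Optional[str]:
    parser = TitleParser()
    parser.feed(html)
    parser.close()
    return parser.title.strip() if parser.title else None


def run_pipeline(
    urls: Iterable[str],
    fetch: Callable[[str], str],
    parse: Callable[[str], Optional[str]] = parse_title,
) -> List[Record]:
    """Orchestration layer: wires a fetcher to a parser, one URL at a time."""
    return [Record(url=url, title=parse(fetch(url))) for url in urls]
```

Because the fetcher is just a function argument, it can be swapped for a different service without changing the parsing or orchestration code, which is the point of separating the layers.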

Which Should You Actually Choose?

Pick PHP if your scraping task is embedded inside an existing PHP application and the scope is bounded – a product importer, a competitor price checker, a feed aggregator inside a CMS. Introducing Python for a small, self-contained task creates deployment overhead and a second runtime to maintain. PHP with Guzzle and DomCrawler will handle it cleanly.

Pick Python if you are building a standalone scraper, working at scale, or extracting data that feeds into analysis or reporting. The library ecosystem, the cleaner syntax, and the community around scraping-specific tooling make Python the pragmatic choice for anything beyond basic integration tasks. If the project will grow – more targets, more fields, more frequency – start with Python. The operational cost of switching later is high.

Two myths are worth busting: that Python is always overkill for small jobs, and that PHP “can’t really do scraping.” Both languages can fetch and parse HTML. The question is which one costs less to write, extend, and maintain for your specific context. For most standalone scraping projects in 2026, that answer is Python. For scraping that lives inside a larger PHP application, the answer is the language already running there.

For developers building applications that consume structured data – whether scraped, pulled from APIs, or managed through a headless CMS – the architecture around the data matters as much as how it is collected.

If you are building a web application that needs custom data integration, automated content pipelines, or bespoke scraping functionality, DRS Web Development builds custom websites and web applications for businesses of all sizes. Get in touch at drs-web.co.uk/contact for a free consultation.

Frequently Asked Questions

Q: Is Python better than PHP for web scraping?

A: For most standalone scraping projects, yes. Python’s purpose-built libraries (Requests, BeautifulSoup, Scrapy) reduce boilerplate significantly, and its broader data ecosystem makes it easier to extend a scraper into analysis or automation workflows. PHP is a better fit when the scraping task is embedded inside an existing PHP application.

Q: Can PHP scrape JavaScript-rendered websites?

A: Not natively. Like Python’s standard HTTP libraries, PHP’s cURL and Guzzle only retrieve the initial HTML response – they do not execute JavaScript. Both languages need a headless browser tool or a third-party rendering service to handle JavaScript-heavy sites. Playwright has official Python bindings; Puppeteer is a Node.js library.

Q: What is the main advantage of Python for web scraping in 2026?

A: Python’s scraping libraries are mature and built around extraction workflows. Scrapy provides a full crawling framework out of the box, and Python integrates naturally with data analysis tools like Pandas. This makes it the dominant choice for scraping projects that need to scale or feed into downstream data workflows.

Q: Does language choice matter at large scraping scale?

A: Less than you might expect. At scale, the hard problems are infrastructure – proxy management, retry logic, rate limiting, job orchestration, and storage. Both PHP and Python can handle the parsing layer; the language matters most for developer ergonomics and ecosystem support, not raw performance.

Q: Is PHP good enough for simple scraping tasks?

A: Yes. With Guzzle for HTTP requests and Symfony DomCrawler for HTML parsing, PHP handles straightforward scraping tasks competently. The main trade-off is more verbose code compared to Python’s equivalent. For scraping embedded inside a PHP application, it is often the most practical choice.

Source: https://oxylabs.io/blog/php-vs-python

This article was researched and written with AI assistance, then reviewed for accuracy and quality. Riya Shah uses AI tools to help produce content faster while maintaining editorial standards.
